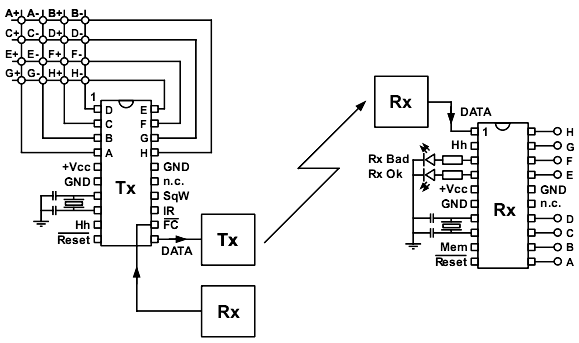

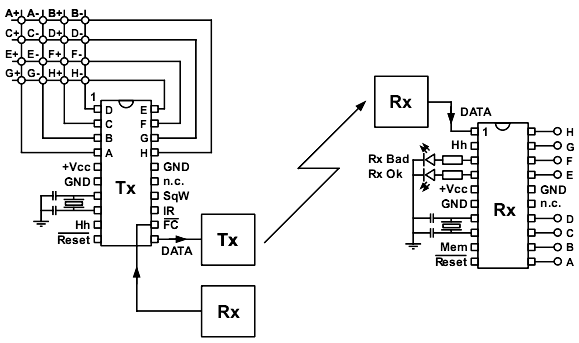

This article describes a pair of microcontrollers programmed to communicate reliably with each other. The two target applications are remote control of electronic devices and simultaneous remote monitoring of several on/off sensors. Both tasks can be carried out over various physical media, such as copper wires, IR light, ultrasound or even radio waves.

Project description

The data transfer protocol uses a forward error correction (FEC) technique. With such coding, the transmitting unit does not send the bare data bits from its input into the transmission medium; instead, it first applies specific mathematical transformations to the data to make them more robust. This enables the receiving chip to recover the original data intact from a partially corrupted stream of received code bits.

Bit damage regularly occurs when conditions for data transfer are not ideal: in noisy media, in media whose basic properties change rapidly (for example, loose or corroded copper wires), when other transmitters are active nearby, and so on. Give it a moment's thought and it becomes clear that practically all real-world media are in fact both rather noisy and plagued by a constantly growing range of interference. The particular FEC algorithm used here works at the so-called "physical layer" of data transmission. It can recover the original data bits even if 1/8 of all transmitted bits were corrupted. It can also detect situations in which more bits were corrupted than it can correct, in which case it blocks any further attempt by the higher-level routines in the receiver to interpret the message, as that might otherwise lead to executing false commands. Among the abundance of remote control protocols available today, the use of FEC makes this one a very rare gem.
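The article does not name the FEC code it uses, so the following is only an illustrative sketch and not the project's actual algorithm. It assumes an extended Hamming (8,4) code, which shows the two properties described above on a per-word basis: it corrects any single corrupted bit in an 8-bit code word, and it flags two corrupted bits as uncorrectable rather than guessing. The function names `encode_nibble` and `decode_word` are hypothetical.

```python
def encode_nibble(nibble):
    """Encode 4 data bits into an 8-bit extended Hamming code word.

    Assumed layout (illustration only): bit 0 is the overall parity bit,
    bits 1, 2 and 4 are Hamming parity bits, bits 3, 5, 6 and 7 carry data.
    """
    code = [0] * 8
    code[3] = (nibble >> 0) & 1
    code[5] = (nibble >> 1) & 1
    code[6] = (nibble >> 2) & 1
    code[7] = (nibble >> 3) & 1
    # Each parity bit makes the parity of the positions it covers even.
    code[1] = code[3] ^ code[5] ^ code[7]
    code[2] = code[3] ^ code[6] ^ code[7]
    code[4] = code[5] ^ code[6] ^ code[7]
    # Overall parity over bits 1..7 makes the whole word even.
    for pos in range(1, 8):
        code[0] ^= code[pos]
    return sum(bit << pos for pos, bit in enumerate(code))


def decode_word(word):
    """Return the 4 data bits, or None if the word cannot be corrected."""
    bits = [(word >> pos) & 1 for pos in range(8)]
    # The syndrome is the XOR of the positions of all set bits;
    # it is zero for a valid code word.
    syndrome = 0
    overall = 0
    for pos in range(8):
        if bits[pos]:
            syndrome ^= pos
            overall ^= 1
    if overall == 1:
        # Odd parity: assume a single error; the syndrome gives its position
        # (position 0 means the overall parity bit itself was hit).
        bits[syndrome] ^= 1
    elif syndrome != 0:
        # Even parity but non-zero syndrome: two bits were corrupted.
        return None
    return bits[3] | (bits[5] << 1) | (bits[6] << 2) | (bits[7] << 3)


if __name__ == "__main__":
    sent = encode_nibble(0b1011)
    print(decode_word(sent ^ 0b00010000))  # one flipped bit: corrected
    print(decode_word(sent ^ 0b00010010))  # two flipped bits: rejected
```

Returning `None` for an uncorrectable word mirrors the behaviour described above: the higher-level routines never get to interpret a message the decoder could not vouch for. Note that with this particular code, three or more errors in one word can still be mistaken for a single error.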

The data transmission protocol used here implements not only bare FEC but also higher levels of data evaluation in the receiver before any actual command is issued. This is done by adding a small overhead, in the form of a checksum, to the data stream before transmission; the receiving unit then uses it to identify valid commands unambiguously. This absolutely prevents execution of false commands by the receiver... As is well known, when dealing with noise the theory of random processes rules, which makes virtually everything possible given enough time, but in practice no false command execution has ever been detected over the sagans of microseconds the DUT devices spent under a range of horrifically brutal tests.
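The checksum step can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's actual frame format: the article does not say which checksum is used or how long it is, so an 8-bit CRC with polynomial 0x07 stands in for it, and the helper names `crc8`, `build_frame` and `parse_frame` are hypothetical.

```python
def crc8(data, poly=0x07):
    """Bitwise CRC-8 over a bytes object (assumed polynomial 0x07, initial value 0)."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def build_frame(command):
    """Transmitter side: append the checksum to the command bytes."""
    return command + bytes([crc8(command)])


def parse_frame(frame):
    """Receiver side: return the command bytes, or None if the check fails."""
    if len(frame) < 2:
        return None
    payload, received = frame[:-1], frame[-1]
    if crc8(payload) != received:
        return None  # do not let a damaged frame trigger a command
    return payload


if __name__ == "__main__":
    frame = build_frame(b"\x2a\x01")
    print(parse_frame(frame))                 # intact frame is accepted
    damaged = bytes([frame[0] ^ 0x04]) + frame[1:]
    print(parse_frame(damaged))               # damaged frame is rejected
```

In a real receiver this check would run only on data the FEC stage has already accepted, so a command is issued only when both layers agree.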

To sum up, the implemented protocol enables efficient use of noisy data transmission channels while at the same time securely preventing the triggering of false commands. In practice, this means that the transmitter can use minimal power and save precious batteries, as the emitted signal does not need to be orders of magnitude stronger than the surrounding noise. Alternatively, the receiver electronics need not be of extreme complexity and quality, as the receiving algorithm efficiently discards all sorts of signal imperfections; for example, it is sufficient to use a simple one-transistor superregenerative radio receiver that operates at microwatts of power instead of much more complex and power-hungry topologies. But even with signals so weak and distorted that they are on the verge of reception, false positive identification of commands can never occur in the receiver.